The Maker Faire was excellent, even though a significant hardware failure took the phone offline for part of Saturday and all of Sunday.

It was too noisy to even attempt to play Zork, so I had prepared the framework so it could run a much simpler game called Moonglow, written by Dave Bernazzani in 2004. It was designed to fit in a very small program, and as a result has a vocabulary of only ~70 words, rather than the more than 650 that Zork has. That worked well enough in testing and at the preview on Friday afternoon, but Saturday was significantly more crowded, and therefore noisier. The game was mostly unplayable. So Friday afternoon I hacked up a new game that only requires yes-or-no answers. It was suggested by my old friend Kevin Savetz, and inspired by the classic BASIC game Animal. It asks a series of yes-or-no questions in an attempt to guess what animal you’re thinking of. If it fails, it asks you to contribute a new question that would have helped distinguish the new animal from the old. Adding new questions was too challenging for the STT engine in such a noisy environment, so I didn’t allow updates. But with a pre-built database of 70ish animals and nearly as many questions, it did okay… for a few hours until the computer died late Saturday.
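For the curious, the guessing part of an Animal-style game boils down to walking a binary tree: each inner node is a question, each leaf is an animal, and every yes or no picks a branch. Here is a minimal sketch in Python. It is not the code that ran on the phone; the names `Node`, `guess`, `TREE`, and `ask_console`, and the four-animal database, are made up for illustration. Like the Faire version, it only guesses and never learns new animals.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Node:
    """A question with yes/no branches or, at a leaf, an animal name."""
    text: str
    yes: Optional["Node"] = None
    no: Optional["Node"] = None

    @property
    def is_leaf(self) -> bool:
        return self.yes is None and self.no is None


def guess(root: Node, ask: Callable[[str], bool]) -> str:
    """Walk the tree, following the yes or no branch after each answer."""
    node = root
    while not node.is_leaf:
        node = node.yes if ask(node.text) else node.no
    return node.text


# Tiny hypothetical database (assumption); the real one had 70ish animals.
TREE = Node(
    "Does it live in water?",
    yes=Node("Is it a mammal?", yes=Node("dolphin"), no=Node("shark")),
    no=Node("Can it fly?", yes=Node("eagle"), no=Node("dog")),
)


def ask_console(question: str) -> bool:
    """Keyboard stand-in for the speech recognizer: accept only yes or no."""
    while True:
        reply = input(question + " (yes/no) ").strip().lower()
        if reply in ("yes", "y"):
            return True
        if reply in ("no", "n"):
            return False
```

Calling `guess(TREE, ask_console)` plays one round at the keyboard. Because every answer is a single yes or no, the recognizer only ever has to tell two words apart, which is the whole appeal in a noisy hall.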

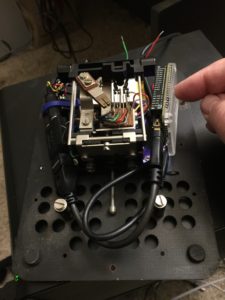

It had been having increasing problems with packet loss (30%) on the little WiFi network I had set up, so I attempted to restart the wlan interface, but that wedged. Attempting to reboot the whole computer yielded an unbootable computer. I had a spare computer with me, but the software backups were A) several days old and B) source only. So I reconstructed as much of the software as I could, but recompiling on the chip computer itself took FOREVER. I probably should have worked out a cross-compile environment, but I didn’t expect a single FST library file to take ~20 hours to compile. It spent most of that time swapping hard because the compiler was taking 650MB of virtual memory, and the poor little chip only has 512MB of RAM.

Despite the computer eating itself, I ended up getting an award.